VidNum1.4K - A Comprehensive Benchmark for Video-based Numerical Reasoning

This research introduces VNum, a comprehensive VideoQA benchmark of 1,379 human-annotated video-question pairs designed to test multi-step numerical reasoning in Vision-Language Models (VLMs). Going beyond simple counting, VNum covers diverse real-world environments and requires models to quantify objects, actions, and events, organized into a three-level hierarchy.

Apr 3, 2026

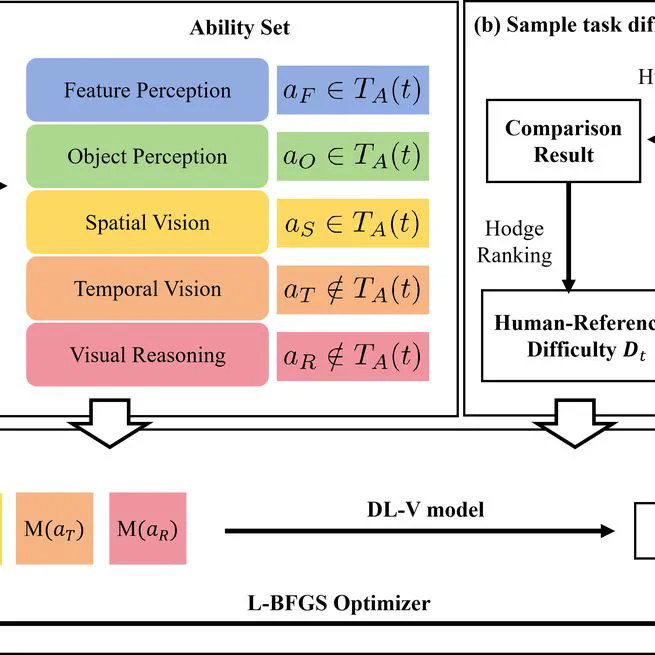

Task Ability Decomposition and Difficulty Quantification of Visual Tasks for AGI Evaluation

This work presents the first systematic exploration of the structure of the task-ability space and its link to task difficulty. It proposes the TADDL-V framework for quantifying visual task difficulty and releases the AGI-V70 benchmark for AGI evaluation.

Oct 27, 2025